24 March 2016

Building our GPU Workstation

This is how we handle GPU rendering

At the end of 2015 we made an important decision: we switched from VRAYforC4D to Octane for Cinema 4D as our main render engine. Besides the software issues, we had another big problem. We had 16 CPU render slaves but only six GTX 780 graphics cards, each with only 3GB of memory. It was clear that we needed a big hardware update to use Octane for large print renderings and complex animations. It took a lot of research to find the right hardware at the right price. And as in the past, the GPU workstation had to meet two requirements: a lot of power combined with a low noise level.

In this article we show you which hardware we chose and why we picked exactly those parts. So let’s start!

First of all, here is a list of the components we used, with prices excluding VAT:

| CPU Intel Xeon E5-1620v3 4x 3.50GHz | 255,26 € |

| SSD 960GB Crucial BX200 | 203,58 € |

| SSD 250GB Samsung 850 Evo | 63,03 € |

| PSU 1200 Watt be quiet! Dark Power Pro 11 | 213,34 € |

| 4x DDR4-2133 regECC 8GB Samsung | 382,72 € |

| MB ASRock X99 WS Intel X99 | 265,87 € |

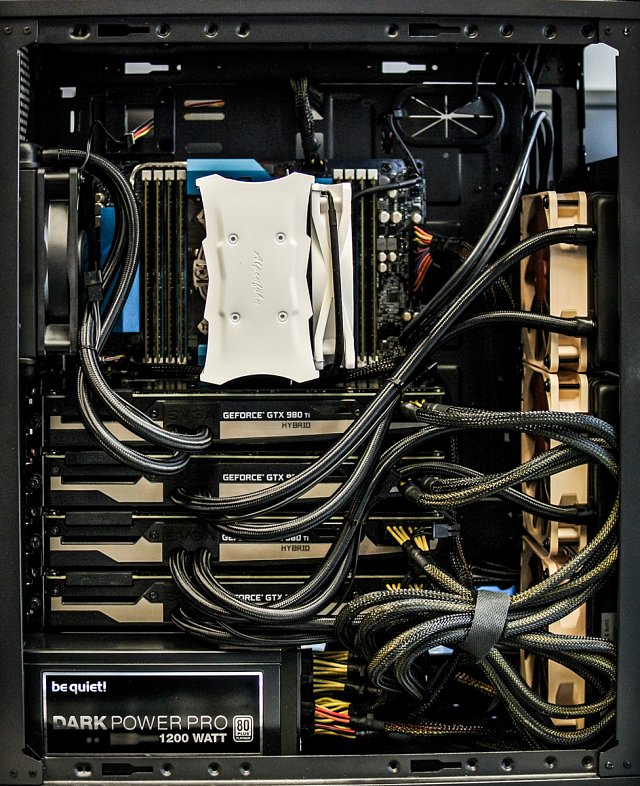

| 4x VGA EVGA GTX980 TI HYBRID 6GB | 2420,00 € |

| EKL Alpenföhn Matterhorn | 46,48 € |

| anidees AI8B Big Tower | 96,50 € |

| 4x Noctua NF-P12-1300 | 60,20 € |

| 4006,98 € |
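The total in the price list can be double-checked with a few lines of Python. This is only a sketch: the dictionary keys are shortened labels of our own, and `Decimal` is used so that euro amounts are added without floating-point rounding. It also shows how much of the budget went into the four graphics cards.

```python
from decimal import Decimal

# Component prices in euros, excluding VAT, copied from the price list.
PRICES_EUR = {
    "CPU Intel Xeon E5-1620v3": Decimal("255.26"),
    "SSD 960GB Crucial BX200": Decimal("203.58"),
    "SSD 250GB Samsung 850 Evo": Decimal("63.03"),
    "PSU 1200W be quiet! Dark Power Pro 11": Decimal("213.34"),
    "4x DDR4-2133 regECC 8GB Samsung": Decimal("382.72"),
    "MB ASRock X99 WS": Decimal("265.87"),
    "4x EVGA GTX980 TI HYBRID 6GB": Decimal("2420.00"),
    "EKL Alpenföhn Matterhorn": Decimal("46.48"),
    "anidees AI8B Big Tower": Decimal("96.50"),
    "4x Noctua NF-P12-1300": Decimal("60.20"),
}


def total(prices):
    """Sum all prices exactly (Decimal start value avoids mixing in floats)."""
    return sum(prices.values(), Decimal("0"))


def share(prices, key):
    """Fraction of the total budget spent on one line item."""
    return prices[key] / total(prices)


if __name__ == "__main__":
    print("Total:", total(PRICES_EUR), "EUR")
    gpu = share(PRICES_EUR, "4x EVGA GTX980 TI HYBRID 6GB")
    print(f"GPU share: {float(gpu):.0%}")
```

The sum matches the 4006,98 € in the list, and roughly 60% of the budget goes into the GPUs.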

Which GPU should we take?

The first and most important part of a GPU workstation is the graphics card. As we needed a lot of power, we decided to put four cards in one machine. That is the maximum you can fit in a normal big tower on a normal mainboard without riser cards or a custom-built case. We wanted a solution that is easy to build, so we decided that four cards would be fine.

Although AMD offers fast GPUs with good value for money, Octane needs CUDA, so we had to go with an Nvidia card with enough memory. The GTX 980 Ti was the fastest card available at the end of 2015 and had 6GB of memory. That was enough for our purposes, and a TITAN with 12GB was not worth the money. It is important to know that 6GB of GPU memory can’t be compared with the RAM usage of heavy CPU render scenes: you need a lot of polygons to hit the memory limit.
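To give a feeling for what “a lot of polygons” means, here is a back-of-envelope estimate. It is a sketch under loudly stated assumptions: the bytes-per-triangle figure is purely hypothetical (meant to cover vertex data, indices and acceleration structures together) and is not Octane’s actual memory layout, and the reserved amount for textures and buffers is also just an assumed value.

```python
GIB = 1024 ** 3  # bytes in one GiB


def max_triangles(vram_gib, reserved_gib, bytes_per_triangle):
    """Rough upper bound on how many triangles fit into GPU memory.

    reserved_gib: memory assumed to be taken by textures, frame buffers etc.
    bytes_per_triangle: HYPOTHETICAL average cost per triangle, including
    geometry and acceleration structures; not a figure published by Octane.
    """
    usable_bytes = (vram_gib - reserved_gib) * GIB
    return int(usable_bytes // bytes_per_triangle)


if __name__ == "__main__":
    # 6GB card, 1GB assumed for textures/buffers, 100 bytes/triangle assumed.
    print(f"{max_triangles(6, 1, 100):,} triangles")
```

Under these assumptions a 6GB card holds on the order of 50 million triangles; doubling the assumed cost per triangle halves that number, so treat the result as an order of magnitude only.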

Once we had decided on four GTX 980 Ti cards, there was another hard decision to make: which cooling solution is the best?

There are exactly 4 ways to cool a GTX 980 Ti:

- Reference Cooler

- Custom top-blow Cooler

- Hybrid Cooler

- Watercooler

Custom Cooler

There are a lot of custom coolers on the market that promise a low noise level. If you use only one or at most two cards with space between them, they are a good choice. But when four cards are sandwiched together, we strongly advise against them. The reason is very simple: these top-blow coolers draw air from below the card and blow it through the heatsink, from where it spreads to all sides. In our scenario that means only the bottom card gets cool air; the rest just get hot air from the cards below. We tested this with our old cards, and 3 out of 6 cards broke within half a year, even though the PC case was open the whole time.

Reference Cooler

As the custom coolers were not suitable for our purpose, we bought some cards with reference coolers. They are by far the cheapest but also have the lowest clock speeds. Their big advantage is the airflow: it goes from front to back, so the fan draws air from inside the case and pushes it out through the back. Ideal for stacking multiple cards. But there was a big showstopper: the cooling is not very effective. After a while you get very high temperatures, which leads to another problem. When the cards reach around 90°C they are throttled to hold this temperature. In render-intensive sessions this can slow things down so much that 3 cool cards are as fast as 4 hot cards. The only way to prevent this is to speed up the GPU fan. But I swear to you that you won’t do this unless you have a separate room for your PC. It sounds like a hairdryer under the table.
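The observation that 3 cool cards can be as fast as 4 hot cards is a simple ratio. A minimal sketch, assuming render throughput scales linearly with the number of cards and with each card’s clock (real throttling is less tidy than that); the function names are our own.

```python
def speed_to_match(cool_cards, hot_cards):
    """Per-card speed (1.0 = unthrottled) at which hot_cards deliver the
    same total throughput as cool_cards running unthrottled.

    Assumes throughput scales linearly with card count and clock speed.
    """
    return cool_cards / hot_cards


def total_throughput(cards, relative_speed=1.0):
    """Total throughput, measured in units of one unthrottled card."""
    return cards * relative_speed


if __name__ == "__main__":
    s = speed_to_match(3, 4)
    print(f"Each hot card runs at {s:.0%} of its unthrottled speed")
    print(total_throughput(4, s), "==", total_throughput(3))
```

In other words, if four throttled cards only match three cool ones, each of them has lost about a quarter of its speed.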

Watercooler and Hybrid Cooler

That left only two options, because none of the air-cooled solutions met our requirements for a quiet yet powerful GPU workstation.

A custom water-cooling loop was not an option for us: we have no experience with them, they are expensive, and they are not very practical when you need to change or replace hardware. So we needed an all-in-one solution. At the time we built the workstation, only hybrid coolers were on the market. That means a water cooler on the GPU plus the normal reference air cooler for the rest of the hardware on the card, which we don’t really care about.

Only three hybrid-cooled cards were available, and only two of them had the right height to be stacked together. We took the faster of those two, the EVGA GTX980 TI HYBRID.

The right Case

We like small workstations, so we wanted our new GPU workstation to be as small as possible. Each GPU has its own radiator, so we needed space for all four of them. We looked at a lot of cases in all price ranges and ended up with a really small and cheap case with some cool features. The anidees AI8 is a really clean big tower. It is only 440mm deep, and all the cages inside can be removed easily. This was very important, because it let us mount three of the four radiators at the front and one at the back of the case.

Other cool features are an integrated fan control and two 2.5” slots on the rear panel.

Other components

Next we needed components that could handle four cards at once and 64GB of RAM, to be prepared for the future. We looked for an X99 chipset board and chose the ASRock X99 WS, which had everything we wanted and was not expensive. As CPU speed was not so important, we bought an Intel Xeon E5-1620v3 (4x 3.50GHz), which has pretty good single-core speed.

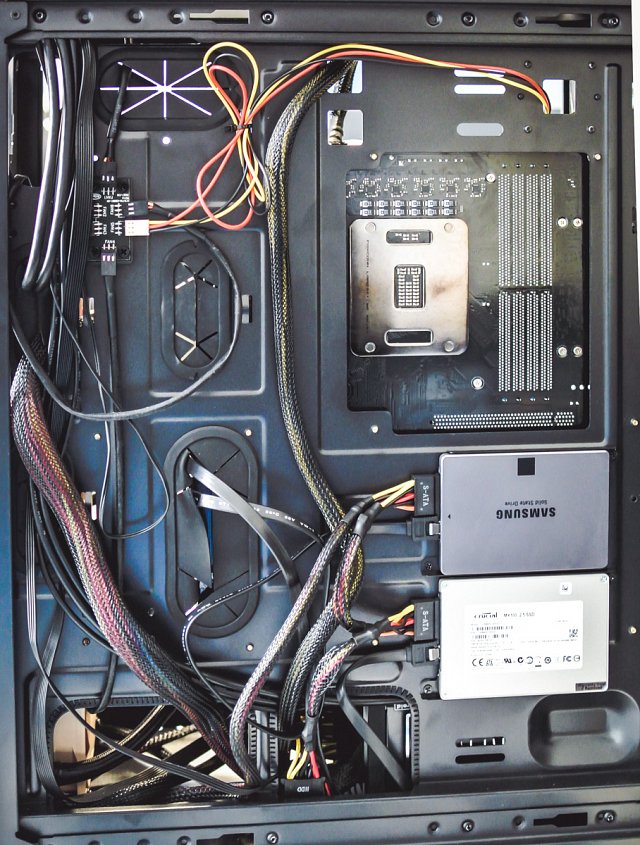

For storage we stuck with two SSDs: a 250GB SSD for the operating system and applications and a 960GB SSD for temporary project storage.

Choosing the right power supply was not easy. You can read a lot about this on the internet, and many people recommend 1500 watts when using four of these cards. We tried a 1200 watt be quiet! Dark Power Pro and can say that it does a very good job: it is silent and stays cool. We only have a simple power meter, and it shows around 800 watts under full load. We are not electricians, but what we can say is that the workstation performs really well, is 100% stable, and has had no problems during the last months of 24/7 render jobs.
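The headroom of the 1200 watt supply can be estimated from the 800 watt reading. This sketch assumes the meter sits at the wall socket, so its reading includes the PSU’s own conversion losses; the optional efficiency factor (for example an assumed 90%) converts it into an estimate of the DC load the PSU actually delivers. The function names are our own.

```python
def dc_load_watts(measured_watts, efficiency=None):
    """Estimated DC output of the PSU.

    measured_watts is assumed to be read at the wall socket. Without an
    efficiency value, the wall reading is used as a conservative upper bound.
    """
    return measured_watts if efficiency is None else measured_watts * efficiency


def psu_load(measured_watts, rating_watts, efficiency=None):
    """Fraction of the PSU's rated output that is in use."""
    return dc_load_watts(measured_watts, efficiency) / rating_watts


def headroom_watts(measured_watts, rating_watts, efficiency=None):
    """Spare rated capacity in watts at the measured load."""
    return rating_watts - dc_load_watts(measured_watts, efficiency)


if __name__ == "__main__":
    print(f"Load (wall reading): {psu_load(800, 1200):.0%}")
    print(f"Load (assumed 90% efficiency): {psu_load(800, 1200, 0.9):.0%}")
    print("Headroom:", headroom_watts(800, 1200), "W")
```

Even taking the wall reading at face value, the supply runs at about two thirds of its rating, which leaves around 400 watts of reserve.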

We also bought an EKL Alpenföhn Matterhorn CPU cooler, 8x 8GB Samsung M393A1G40DB0-CPB DDR4-2133 regECC DIMM CL15, fan extension cables to connect the radiator fans to the fan control, and 4x Noctua NF-P12-1300 fans to replace the stock GPU radiator fans.

Putting all together

Once all the components had arrived, putting them together was not really hard. We set up the airflow from back to front, so all the hot air is carried off immediately and doesn’t collect under the table. Cable management is important in a small case: we routed most of the cables at the back of the case to keep the airflow inside as good as possible, and we used a lot of cable ties. The two 2.5” slots at the back for our SSDs are also really important, because the case is so compact that there is no room left inside.

The integrated fan control is operated with a small switch on the top of the case. You can choose between “Full Speed”, “Half Speed” and “off”.

When the GPUs are idle, the “Half Speed” setting is really quiet and cools all the cards well. When you start rendering, you should switch to “Full Speed”. The workstation gets louder and is no longer silent, but compared to air-cooled solutions the noise isn’t really annoying, because it is constant. Speed-controlled air coolers vary their speed in very short intervals, and so does their noise. We invested in really good Noctua fans to keep the noise level as low as possible.

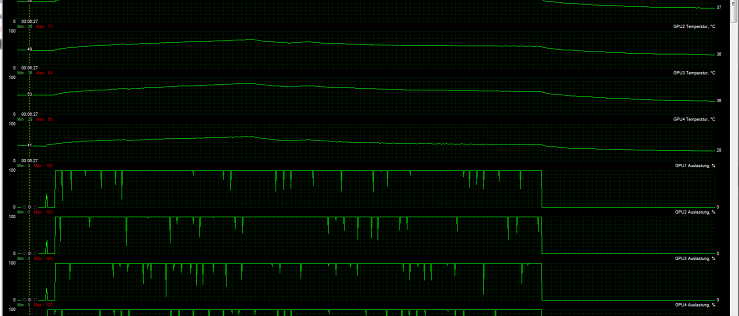

Benchmarks and temperatures

The most important things for us were a lot of GPU power, good cooling and an easy build. We had achieved the third point, but two were left.

So we ran some Octane benchmarks and got around 520 points without overclocking. After that we rendered a heavy Octane scene with the fans on “Half Speed”. The temperature slowly rose to 84°C. We then switched to “Full Speed”, and the temperatures dropped to around 65°C.

After a few months with this workstation, we can say that even at ambient temperatures above 30°C the cards run at around 75°C, which is still low enough to prevent throttling.
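To keep an eye on temperatures during long render jobs, they can be polled with NVIDIA’s `nvidia-smi` tool. A minimal sketch, assuming `nvidia-smi` is installed and on the PATH; the 90°C limit is the rough throttle point mentioned above for the reference cards, not an official specification, and the helper names are hypothetical.

```python
import shutil
import subprocess

THROTTLE_C = 90  # rough throttle point quoted above; an assumption, not a spec

# Real nvidia-smi query flags: one "index, temperature" line per GPU.
QUERY = [
    "nvidia-smi",
    "--query-gpu=index,temperature.gpu",
    "--format=csv,noheader,nounits",
]


def parse_temps(csv_text):
    """Parse 'index, temperature' lines into a {gpu_index: temp_c} dict."""
    temps = {}
    for line in csv_text.strip().splitlines():
        index, temp = (field.strip() for field in line.split(","))
        temps[int(index)] = int(temp)
    return temps


def headroom(temps, limit=THROTTLE_C):
    """Degrees Celsius each GPU is below the assumed throttle point."""
    return {gpu: limit - t for gpu, t in temps.items()}


def read_temps():
    """Run nvidia-smi once and return the current GPU temperatures."""
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_temps(result.stdout)


if __name__ == "__main__" and shutil.which("nvidia-smi"):
    for gpu, margin in sorted(headroom(read_temps()).items()):
        print(f"GPU {gpu}: {margin} °C below the throttle point")
```

Running this in a loop (or from a scheduler) during a render shows quickly whether switching the fans to “Full Speed” is needed.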

Conclusion

Overall we are very happy with this configuration. It costs around 4000€ excl. VAT, is easy to build and, compared to other solutions, really quiet. But we are always looking for ways to improve the hardware. At the moment we are also testing a Gigabyte GeForce GTX 980 Ti Xtreme OC WaterForce, which is fully water-cooled and a little faster. As the card is out of stock everywhere, we need more time to test new configurations, but as soon as we have an improvement there will be a new blog post.

Mostyle

25 Mar 2016 - 04:40:29

Interesting read… Thanks for sharing …

harm van zon

28 Mar 2016 - 06:35:07

I would swap the motherboard for the ASUS X99-E WS. It has two PLX PCI-Express 3.0 switches, so all cards would run at x16 PCIe speed instead of x8 as they do now.

Joerg

29 Mar 2016 - 16:24:55

Our aim was a cost-effective solution. So 120€ more for a preparation time that is a few seconds faster? We don’t think it’s worth it.

Peter

24 Apr 2016 - 11:20:13

Nice article! After reading about the EVGA here, I bought the same card. But it’s noisy as hell! The driver doesn’t seem to throttle the fan speed according to the GPU temperature. It always runs at 100%, even when I switch it to quiet mode. It makes a lot of noise! Did you have the same problem? How did you fix it?

Eddy

15 Jun 2016 - 13:20:40

Very interesting! Would you recommend Octane for architectural, furniture or interior renderings? Or is there a loss of quality compared to V-Ray?

Mattes

12 Jul 2016 - 10:39:33

Thanks for this article. Would this setup also work with the new EVGA GeForce GTX 1080, and would it also need this kind of cooling?

Noé Mbiendi

04 Aug 2016 - 11:56:19

I’m so excited to get the same engine that I’m building the same machine for my own use right now. I don’t know anything about hardware, so I’m just following along like a robot. But between these cards:

1 – EVGA GTX980 TI HYBRID & EVGA GTX 980 Ti SC+ACX2.0 (HYBRID): what is the difference, and which one fits well in that case?

2 – Between the ASUS X99-E WS and the ASRock X99 WS Intel X99 board, which would you recommend?

Thx and Regards.

Sorry for my English, I’m using Octane for Cinema 4D.

Thorwald

03 Sep 2016 - 10:06:17

Great setup. Is there a better case that also fits HDDs and SSDs? Any suggestions?